~/vqsp/vqsp_env/lib/python3.6/site-packages/sklearn/utils/validation.py in check_X_y(X, y, accept_sparse, accept_large_sparse, dtype, order, copy, force_all_finite, ensure_2d, allow_nd, multi_output, ensure_min_samples, ensure_min_features, y_numeric, warn_on_dtype, estimator)ħ17 ensure_min_features=ensure_min_features,ħ21 y = check_array(y, 'csr', force_all_finite=True, ensure_2d=False, > 463 y_numeric=True, multi_output=True)Ĥ65 if sample_weight is not None and np.atleast_1d(sample_weight).ndim > 1: ~/vqsp/vqsp_env/lib/python3.6/site-packages/sklearn/linear_model/base.py in fit(self, X, y, sample_weight)Ĥ62 X, y = check_X_y(X, y, accept_sparse=, ~/vqsp/vqsp_env/lib/python3.6/site-packages/autograd/wrap_util.py in unary_f(x)ġ4 subargs = subvals(args, zip(argnum, x))Ħ2 test_mse = mean_squared_error(pred, y_test)

~/vqsp/vqsp_env/lib/python3.6/site-packages/autograd/tracer.py in trace(start_node, fun, x)ġ1 if isbox(end_box) and end_box._trace = start_box._trace: > 10 end_value, end_node = trace(start_node, fun, x) ~/vqsp/vqsp_env/lib/python3.6/site-packages/autograd/core.py in make_vjp(fun, x) The gradient has the same type as the argument."""Ģ7 raise TypeError("Grad only applies to real scalar-output functions. The function `fun`Ģ4 should be scalar-valued. ~/vqsp/vqsp_env/lib/python3.6/site-packages/autograd/differential_operators.py in grad(fun, x)Ģ3 arguments as `fun`, but returns the gradient instead. > 20 return unary_operator(unary_f, x, *nary_op_args, **nary_op_kwargs) ~/vqsp/vqsp_env/lib/python3.6/site-packages/autograd/wrap_util.py in nary_f(*args, **kwargs) > 88 g = ad(objective_fn)(x) # pylint: disable=no-value-for-parameter ~/vqsp/vqsp_env/lib/python3.6/site-packages/pennylane/optimize/gradient_descent.py in compute_grad(objective_fn, x, grad_fn) > 64 g = pute_grad(objective_fn, x, grad_fn=grad_fn) ~/vqsp/vqsp_env/lib/python3.6/site-packages/pennylane/optimize/gradient_descent.py in step(self, objective_fn, x, grad_fn) ValueError Traceback (most recent call last) Plt.scatter(X_test, y_test, color='b',s=1) Plt.scatter(X_train, y_train,color='g', s=1)

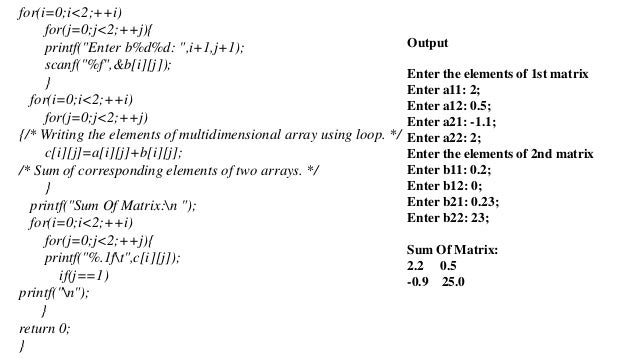

Print("cur train RMSE:" +str(np.sqrt(train_mse))) Train_mse = mean_squared_error(train_pred,y_train) Print("cur test RMSE: "+str(np.sqrt(test_mse))) Test_mse = mean_squared_error(pred, y_test) Test_data = collect_rand_sample(0.1,100,params) Train_data = collect_grid_sample(0.1,100,params) Phis = np.random.rand(n_sample)*phi_range Res = np.concatenate((res,cur_zero_prob))ĭata = np.concatenate((phis.reshape(n_sample, 1),res.reshape(-1,1)),axis=1)ĭef collect_rand_sample(phi_range, n_sample, params): Phis = np.arange(0,phi_range,phi_range/n_sample)Ĭur_zero_prob = measure_full_sys(cur_phi, params).reshape(1) Import matplotlib.pyplot as measure_full_sys(phi, params):ĭef collect_grid_sample(phi_range, n_sample, params): Wanted to ask whether this is supposed to be supported? Below is my code: import pennylane as qmlĭev = qml.device("default.qubit", analytic=True, wires=n_probes + n_ancillas)įrom sklearn.linear_model import LinearRegressionįrom trics import mean_squared_error eye (size ) 51 52 # Return the dimensions of the matrix.Hello! I am trying to use sklearn regression within my cost function, but that seems to give an error when I call clf.fit (clf is the sklearn regressor). 49 def identity (self, size ) : -> 50 return numpy. _init_ ( ) 45 46 def name (self ) : /Users/mohameddaoudi/opt/anaconda2/lib/python2.7/site-packages/cvxpy/interface/numpy_interface/ndarray_interface.pyc in const_to_matrix (self, value, convert_scalars) 48 # Return an identity matrix. is_matrix ( ) : /Users/mohameddaoudi/opt/anaconda2/lib/python2.7/site-packages/cvxpy/expressions/expression.pyc in cast_to_const (expr) /Users/mohameddaoudi/opt/anaconda2/lib/python2.7/site-packages/cvxpy/expressions/constants/constant.pyc in _init_ (self, value) 42 self.

T ,X ) ,Z1 ), "nuc" ) ) 22 23 #problem = cp.Problem(objective) /Users/mohameddaoudi/opt/anaconda2/lib/python2.7/site-packages/cvxpy/atoms/norm.pyc in norm (x, p, axis) 45 return normNuc (x ) 46 elif p = "fro" : -> 47 return pnorm (x, 2, axis ) 48 elif p = 2 : 49 if axis is None and x.
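Regarding the clf.fit question: the PennyLane traceback ends inside sklearn's `check_X_y` / `check_array`, reached while autograd is tracing the cost function. One possible workaround, offered here as an assumption rather than something stated in the post, is to compute the one-feature least-squares fit in closed form instead of calling the sklearn regressor. The sketch below uses plain Python arithmetic only. The names `fit_line` and `mse` and the toy data are hypothetical. Inside a real cost function the same formulas would presumably be written with autograd's wrapped NumPy (`autograd.numpy`), which this sketch does not test.

```python
def fit_line(xs, ys):
    """Closed-form least squares for y ~ slope * x + intercept (one feature)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of squared deviations and cross-deviations from the means.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def mse(xs, ys, slope, intercept):
    """Mean squared error of the fitted line on (xs, ys)."""
    n = len(xs)
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)) / n


# Toy data lying exactly on y = 2x + 1 (made up for illustration only).
xs = [0.0, 0.025, 0.05, 0.075]
ys = [2.0 * x + 1.0 for x in xs]

slope, intercept = fit_line(xs, ys)
train_mse = mse(xs, ys, slope, intercept)
print("slope:", slope, "intercept:", intercept, "train MSE:", train_mse)
```

Because the fit is an explicit formula rather than a library call that validates its inputs, every step is ordinary arithmetic on the data. Whether that is enough for gradients to flow through the fit in the original setup would need to be checked against the actual cost function.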
